As generative AI and traditional machine learning become embedded into day-to-day business processes, infrastructure demands are shifting. These workloads require sustained compute density and continuous power delivery, placing new pressure on data center environments that were not designed for this level of intensity.

To better understand how organizations are responding, Connection partnered with Foundry to survey 108 senior IT, operations, and procurement decision-makers about AI adoption, power impact, planning rigor, and sustainability priorities. The results show that AI’s effect on power infrastructure is both immediate and accelerating with maturity.

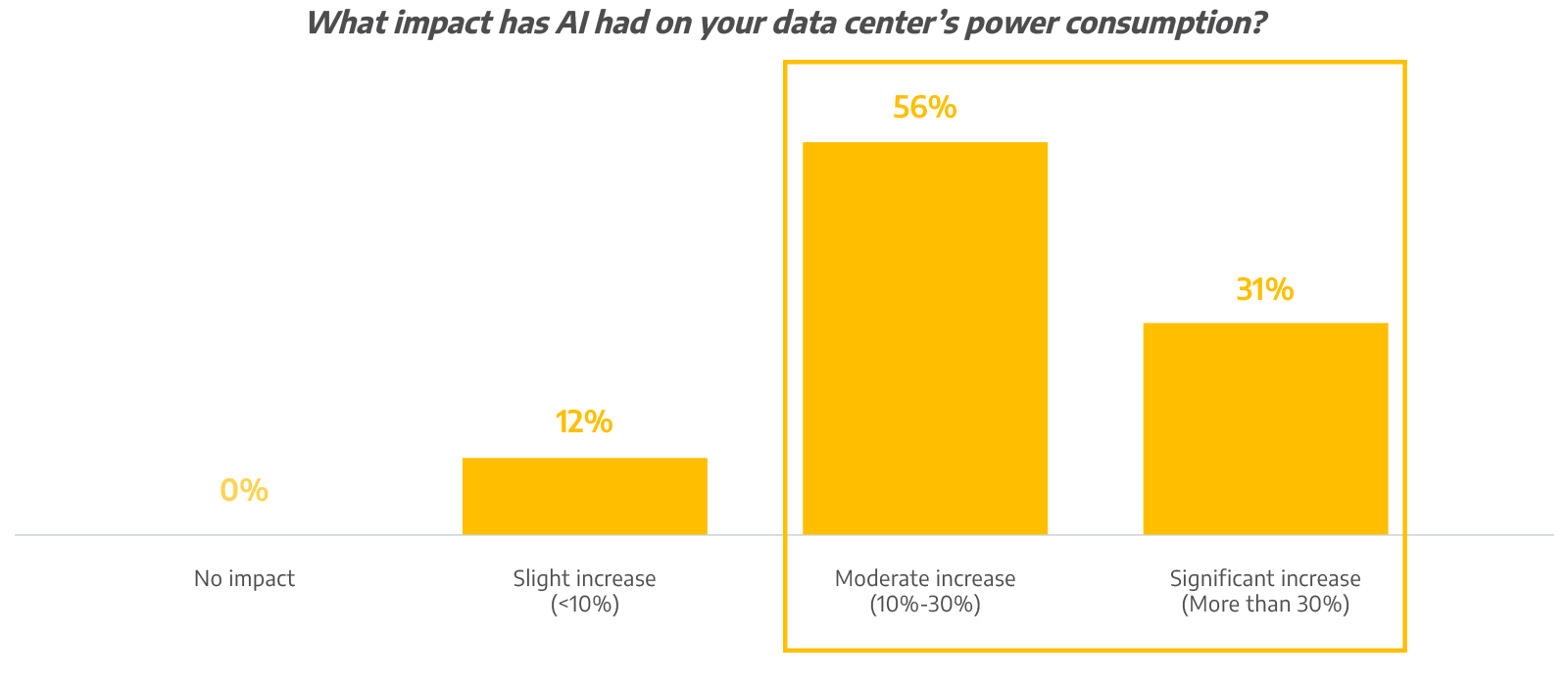

AI Is Rapidly Increasing Power Demand

The impact of AI on energy consumption is already measurable: 87% of respondents say AI workloads have moderately or significantly increased data center power consumption. The increase is even more pronounced among mature adopters. Notably, 54% of organizations with fully integrated AI report a significant power impact, compared to just 17% of those in earlier stages of deployment. As AI maturity increases, so does sustained energy demand.

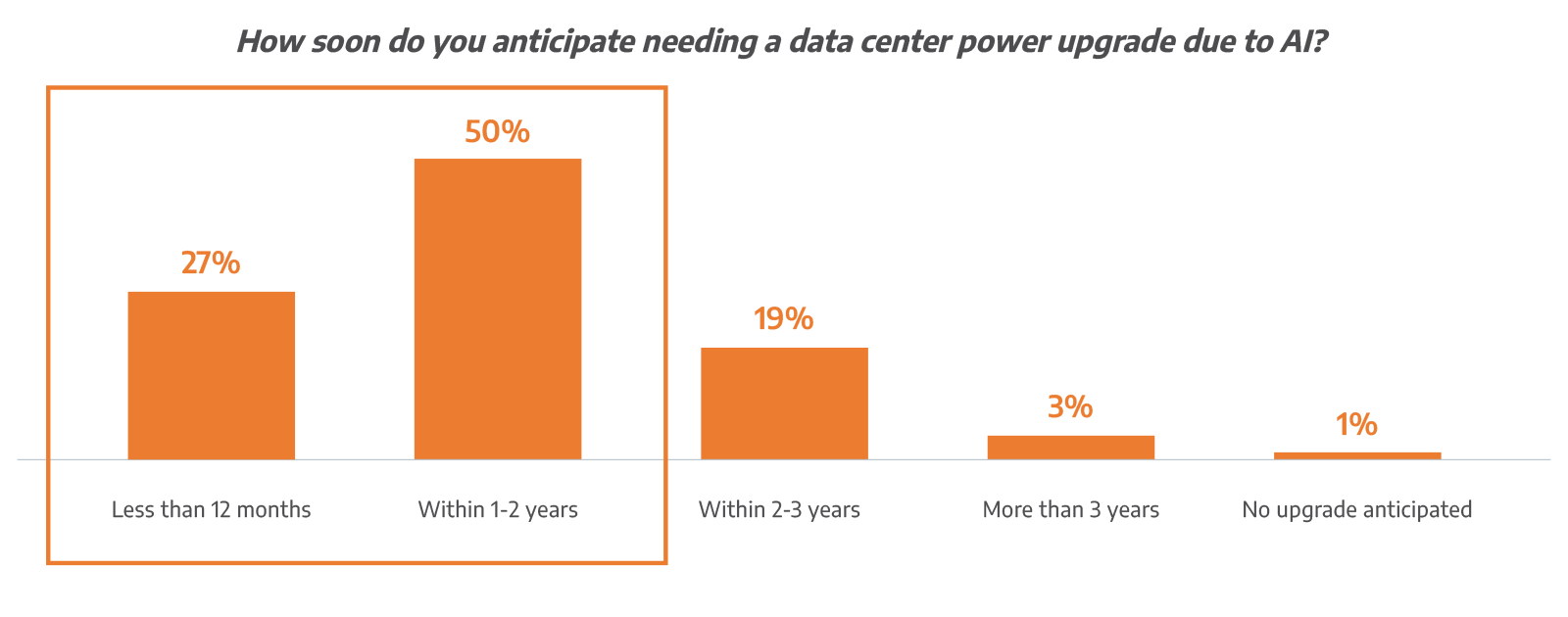

Most enterprises recognize that current infrastructure may not be sufficient for long-term growth. Roughly 77% anticipate that a data center power upgrade will be necessary within the next two years to support AI initiatives. Among mature AI adopters, the timeline is even shorter: more than half expect upgrades within the next 12 months.

Power is to AI what data is to intelligence—it is foundational. As AI moves into mission-critical environments, stable and resilient power infrastructure is not optional or secondary. Organizations in industries like healthcare and financial services cannot afford downtime. AI strategy must account for infrastructure readiness from the start.

Planning Rigor Increases with AI Maturity

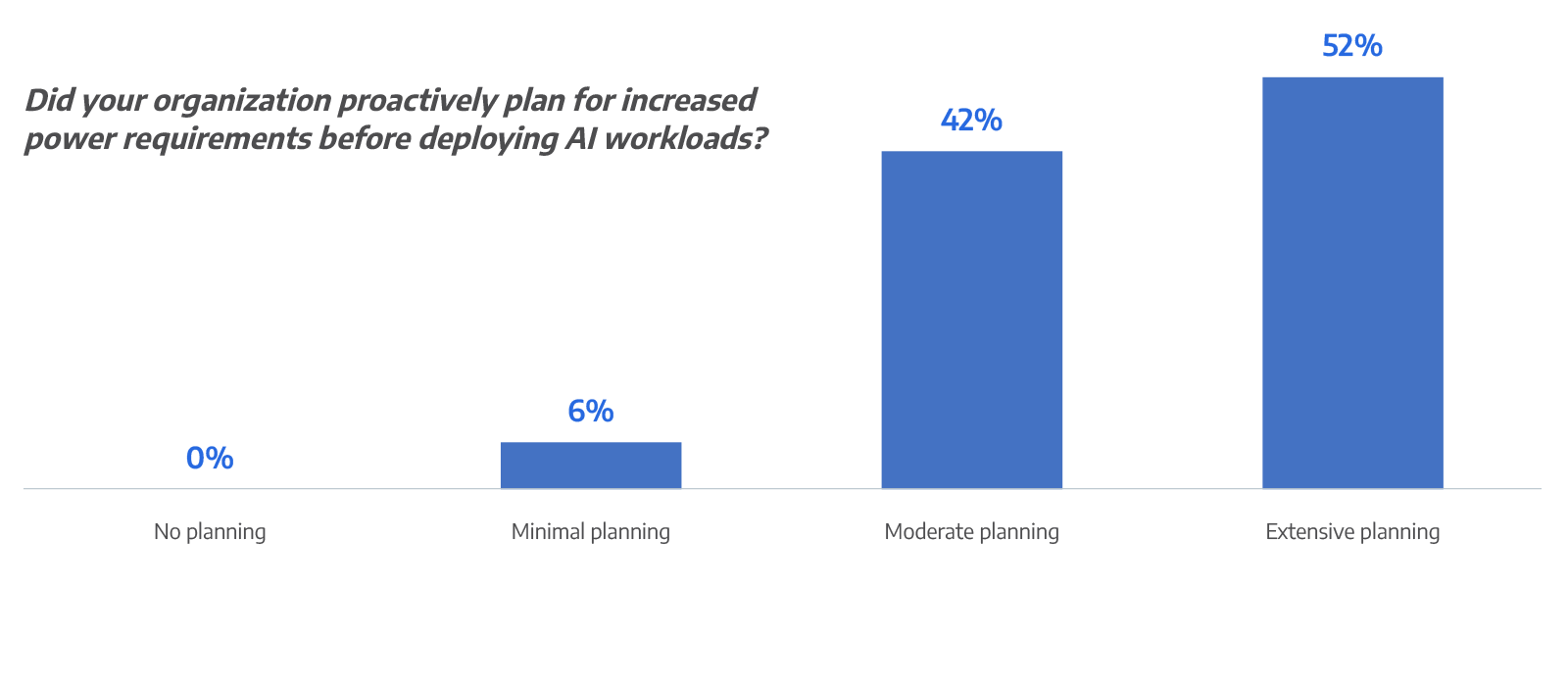

Proactive planning is an emerging differentiator. More than half of respondents report completing extensive power planning before deploying AI workloads, while another 42% say they conducted moderate planning. Only a small minority entered deployment with minimal preparation.

Organizations further along in AI maturity are significantly more likely to have invested in detailed forecasting. This matters because AI workloads introduce sustained, high-density power profiles that differ from traditional enterprise applications.

Confidence in forecasting tools also reflects this shift. A majority (53%) of respondents rate their current power forecasting tools as very effective, and more than one-third consider them effective. Effectiveness ratings are highest among mature AI organizations, reinforcing that accurate modeling becomes essential as environments scale.

Scaling Power for AI Comes with Real Constraints

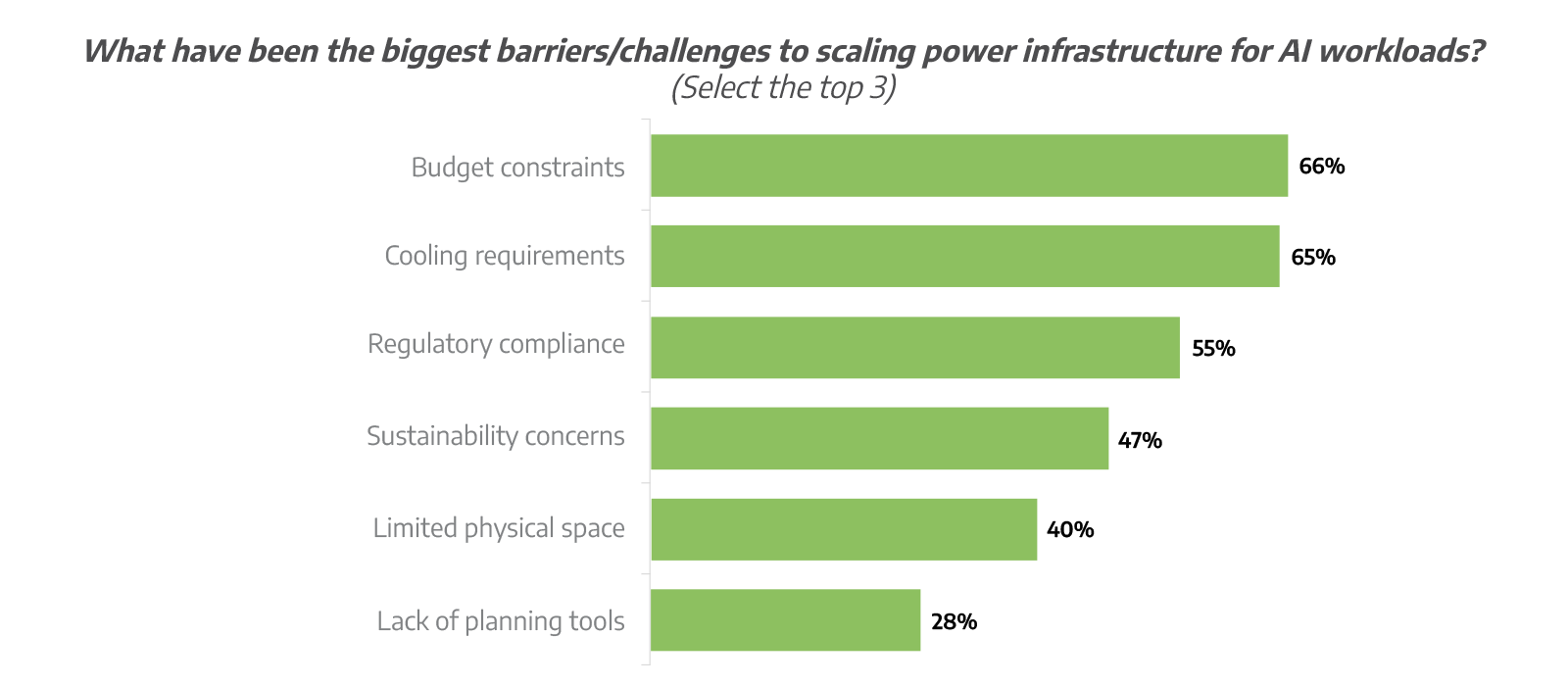

While urgency is high, expanding infrastructure presents real barriers. Two-thirds of respondents (66%) cite budget constraints as a primary obstacle when scaling power capacity. Cooling requirements follow closely (65%), and more than half (55%) cite regulatory compliance pressures.

As rack density increases and power loads intensify, cooling strategies must evolve to remove the additional heat. Regulatory requirements tied to energy usage and sustainability reporting add another layer of complexity. Organizations with higher AI maturity are also more likely to report challenges related to planning tools, signaling growing sophistication and operational demands.

Connection’s study highlights rapid AI deployment at the enterprise level and the resulting power density challenges. I believe power and cooling needs will continue to rise, further straining infrastructure. Adopting new solutions is essential for reliability, performance, cost efficiency, and future-proofing enterprise AI deployments.

When AI Scales, Efficiency Overtakes Reliability

Reliability remains foundational in data center planning, but priorities are evolving as AI scales. When asked to rank power-infrastructure priorities for AI workloads, respondents put reliability (28%) and energy efficiency (22%) at the top. Among organizations actively scaling AI, energy efficiency surpasses reliability as the number one priority. This reflects growing pressure to manage sustained power consumption responsibly.

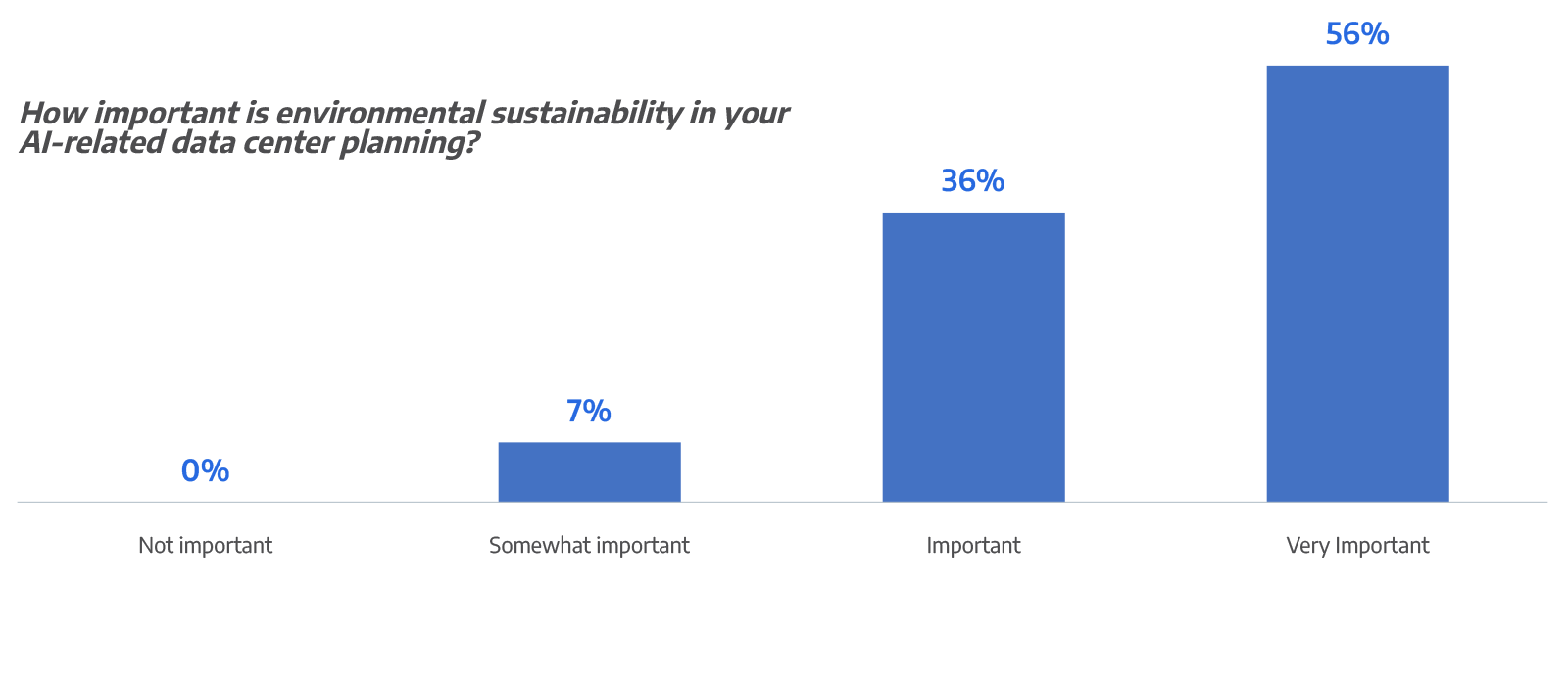

Sustainability is a major consideration in AI-related planning, with 93% of respondents indicating it plays a significant role in their decision-making. Yet only one-third report having a fully integrated sustainability strategy to manage increased power usage from AI workloads. The gap between intention and execution highlights the need for coordinated infrastructure planning.

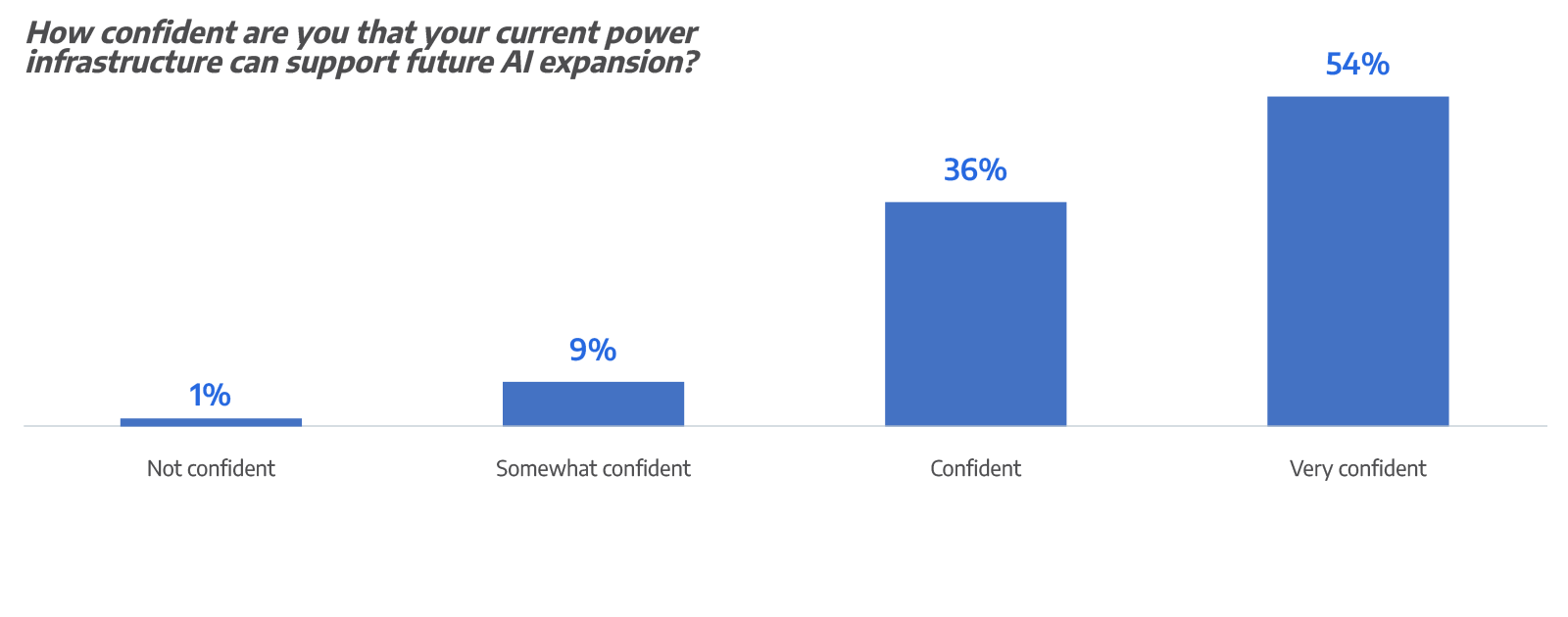

Most Leaders Feel Ready—but Maturity Drives Confidence

As AI deployments grow, many teams are asking the same question: can existing power infrastructure keep up? Just over half of respondents (54%) say they are very confident their current power infrastructure can support future AI expansion, while more than one-third (36%) describe themselves as confident. Confidence is higher among organizations with more mature AI deployments, reflecting that readiness tends to improve as teams gain experience and invest in power and cooling capacity.

But confidence shouldn’t be treated as proof of readiness. With 77% anticipating a power upgrade within two years, and budget and cooling constraints cited most often, many organizations will still need near-term power and cooling upgrades before they can scale AI smoothly.

Proactive Planning Is Essential

AI’s impact on data center power demand is both significant and already underway. Nearly all enterprises (97%) have AI in production, and most (87%) say it’s already moderately or significantly increasing energy consumption. Looking ahead, more than three-quarters of respondents expect to make infrastructure upgrades within the next two years. The path forward isn’t just about adding capacity; it also means working through real constraints: budget limits, cooling requirements, regulatory pressure, and rising expectations to manage sustainability alongside growth.

Addressing these pressures requires more than incremental adjustments. It requires forward-looking infrastructure strategy grounded in accurate forecasting, cross-functional coordination, and scalable design. Organizations that take a proactive, holistic approach to modernizing power infrastructure will be better positioned to support AI workloads securely, efficiently, and sustainably.

This research shows how quickly the AI conversation is becoming a power conversation. Most organizations expect they’ll need to upgrade data center power infrastructure within the next couple of years to support AI workloads, which aligns with accelerating customer investment in UPS and power infrastructure for the next wave of AI.

Turn Insight into Action

AI-driven power demand is already reshaping infrastructure planning. The next step is understanding what it means for your environment. If you’re evaluating how to scale power capacity to support AI workloads, Connection can help you assess your current state, identify infrastructure gaps, and develop a forward-looking modernization plan aligned to performance, efficiency, and sustainability goals.

To explore the complete findings and benchmark your organization against industry peers, read the full 2026 Foundry MarketPulse Report.